Key Points

- Choosing between tape and cloud backup should be based on restore performance, reliability, and total recovery cost, not just raw storage price.

- A one-week restore readiness pilot helps MSPs and IT teams test real-world restore outcomes, including recovery speed, data integrity, and operator effort.

- Standardize your test environment, run timed full and partial restores, and validate data integrity to objectively compare each medium.

- Calculate true restore costs, including egress fees, labor, and handling, to reveal total recovery expenses for tape, VTL, and cloud backups.

- Map risk controls, such as immutability, encryption, and air-gapped isolation, to align backup tiers with compliance and ransomware resilience.

- Use NinjaOne to automate restore validation, reporting, and evidence collection, simplifying documentation and QBR presentations across clients.

Choosing between tape vs. cloud backup isn’t just about storage; it’s about recovery speed, reliability, and alignment with your workload. This guide’s one-week restore readiness pilot helps you test both options and see which truly fits your client’s recovery objectives.

Tape vs. cloud backup: A 10-step guide to find the best fit

Picking the right backup medium can make or break your backup and disaster recovery strategy. The following steps provide you with data-backed insights on whether tape or cloud backup performs best according to your clients’ real-world recovery needs.

📌 Prerequisites:

- Existing representative dataset for testing

- Access to cloud-managed and tape backups

- Defined RPO and RTO targets, including maximum allowable recovery points

- Test hosts, network constraints, and an evidence repository

- Named owners for the pilot, change approvals, and a rollback plan

Step #1: Select sample datasets and define your acceptance criteria

Before starting a restore pilot, establish what you’re restoring and what success looks like. Begin by selecting two contrasting datasets; for instance, one operational system, such as a file server, and one archival system that handles long-term data.

For each dataset, define the following key performance benchmarks:

- Recovery objectives (RTO and RPO): Define how quickly data must be restored (RTO) and how much data loss is acceptable for each dataset (RPO).

- Governance or security requirements: Verify whether your datasets require immutability, encryption, or physical air-gap assurance.

- Success metrics: Define what success looks like, including acceptable restore times and data validation checks to confirm backup integrity.
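The criteria above can be written down in a machine-checkable form before any restore runs. The following is a minimal sketch, assuming hypothetical dataset names and target values; `AcceptanceCriteria` and `meets_objectives` are illustrative names, not part of any backup product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Pass/fail targets for one pilot dataset (all values are examples)."""
    dataset: str
    rto_minutes: float  # longest acceptable restore duration
    rpo_minutes: float  # oldest acceptable recovery point
    require_immutability: bool = False
    require_encryption: bool = False
    require_air_gap: bool = False


def meets_objectives(criteria, restore_minutes, recovery_point_age_minutes, integrity_ok):
    """Return True only if the restore met RTO, RPO, and the integrity check."""
    return (
        restore_minutes <= criteria.rto_minutes
        and recovery_point_age_minutes <= criteria.rpo_minutes
        and integrity_ok
    )


# Hypothetical targets for the two contrasting datasets.
file_server = AcceptanceCriteria(
    "file-server", rto_minutes=240, rpo_minutes=24 * 60, require_encryption=True
)
archive = AcceptanceCriteria(
    "archive", rto_minutes=72 * 60, rpo_minutes=7 * 24 * 60,
    require_immutability=True, require_air_gap=True,
)
```

Recording the targets up front keeps the later pass/fail decision from drifting after the results are in.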

Step #2: Standardize the test environment to ensure impartial results

Uncontrolled variables can skew your pilot results. To avoid this, standardize your test environment by using identical restore hardware, maintaining consistent system configurations, and applying the same antivirus and network policies to every run.

For cloud restores, cap your network throughput to the same limits used in tape-based tests. For tape backups, confirm that the host path, drivers, and firmware are identical across runs.

Document your environment parameters, including CPU, RAM, disk type, bandwidth, and background processes. This ensures that any differences in outcome can be attributed to the backup method used, rather than to environmental factors.
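One way to document the environment is to capture a snapshot before each run and compare it with the baseline. This is a rough sketch using only the Python standard library; the field names are assumptions, and values the standard library cannot read portably (RAM, disk type, the bandwidth cap) are passed in or noted by hand.

```python
import os
import platform
import shutil
from datetime import datetime, timezone


def capture_environment(restore_path=".", bandwidth_cap_mbps=None, notes=""):
    """Record the host parameters that could influence restore timings."""
    usage = shutil.disk_usage(restore_path)
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "hostname": platform.node(),
        "os": platform.platform(),
        "cpu_count": os.cpu_count(),
        "restore_target_free_gb": round(usage.free / 1e9, 1),
        "bandwidth_cap_mbps": bandwidth_cap_mbps,  # entered manually
        "notes": notes,  # e.g. RAM, disk type, background processes
    }


def environment_drift(baseline, current,
                      ignore=("captured_at", "restore_target_free_gb")):
    """List the keys whose values differ between two snapshots."""
    keys = set(baseline) | set(current)
    return sorted(
        k for k in keys
        if k not in ignore and baseline.get(k) != current.get(k)
    )
```

An empty list from `environment_drift` suggests the two runs are comparable; any listed key is worth explaining in the evidence packet.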

Step #3: Measure the prep time of data backup and restore workflows

Measuring the time between preparation and actual recovery reveals hidden operational delays that impact recovery speed. Through this, you’ll see how responsive and automated your platform is, and where technician effort or slowdowns drive up costs.

Check repositories, logins, and indexes in cloud backups to make sure your data’s ready before testing. For tape, load the media, locate the right files, and confirm you’re restoring the correct backup set.

Record manual interventions and idle wait times required to begin the restoration process itself. These metrics reveal the amount of time and human effort invested before the first byte is transmitted.
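Prep time can be logged per phase so that manual work is separated from automated work. The sketch below is one possible approach; `PrepLog` and the phase names are hypothetical, and the `pass` statements stand in for the real activity being timed.

```python
import time
from contextlib import contextmanager


class PrepLog:
    """Collects the duration of each preparation phase for one medium."""

    def __init__(self, medium):
        self.medium = medium
        self.phases = []

    @contextmanager
    def phase(self, name, manual=False):
        """Time the enclosed block and flag whether a technician was needed."""
        start = time.monotonic()
        try:
            yield
        finally:
            self.phases.append({
                "name": name,
                "manual": manual,
                "seconds": time.monotonic() - start,
            })

    def total_seconds(self):
        return sum(p["seconds"] for p in self.phases)

    def manual_seconds(self):
        return sum(p["seconds"] for p in self.phases if p["manual"])


log = PrepLog("tape")
with log.phase("load media", manual=True):
    pass  # technician loads the cartridge
with log.phase("locate backup set"):
    pass  # catalog lookup runs here
```

Comparing `manual_seconds()` with `total_seconds()` for each medium shows how much of the wait before the first byte is human effort.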

Step #4: Run separate timed full restores for cloud and tape backups

After establishing datasets and standardizing your test environment, conduct timed full restores using both tape and cloud backup methods. Record each restore’s start and end times, monitor throughput trends, and document any retry events or bottlenecks you encounter along the way.

This step provides you with the actual performance of tape vs. cloud backup solutions under real-world recovery conditions. Additionally, this shows how well each solution handles retries, corruption checks, and throughput stability. Capture screenshots, logs, and metrics as supporting evidence to build client confidence in recovery outcomes through documented proof.
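A small wrapper can record timing and retries the same way for both media. This is a minimal sketch, assuming the restore itself is triggered by a callable you supply (`restore_fn` is a placeholder for your backup tool's own restore command); it reports average throughput only, not per-minute samples.

```python
import time


def timed_restore(restore_fn, expected_bytes, max_attempts=3):
    """Run restore_fn with retries and return timing and retry evidence."""
    started = time.monotonic()
    errors = []
    succeeded = False
    for _ in range(max_attempts):
        try:
            restore_fn()
            succeeded = True
            break
        except Exception as exc:  # record the failure, then retry
            errors.append(repr(exc))
    elapsed = time.monotonic() - started
    avg_mb_per_s = None
    if succeeded and elapsed > 0:
        avg_mb_per_s = expected_bytes / 1e6 / elapsed
    return {
        "succeeded": succeeded,
        "failed_attempts": len(errors),
        "elapsed_seconds": elapsed,
        "avg_mb_per_s": avg_mb_per_s,
        "errors": errors,
    }
```

Because the elapsed time includes failed attempts, the result reflects what a client would actually wait, not just the successful pass.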

Step #5: Validate the integrity of recovered cloud and tape backup data

The value of a backup lies in its ability to recover data completely, with integrity and usability intact. Start your backup validation by running checksum or hash comparisons between the source and the recovered representative datasets.

Afterward, open a subset of critical files, run lightweight queries on databases, and confirm that key applications can read recovered content. Capture corruptions, mismatches, or errors, and preserve them inside your evidence repository.
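The checksum comparison can be sketched with Python's `hashlib`. The example below assumes SHA-256 and a manifest keyed by relative path; the function names are illustrative, and the source manifest should be built before the backup is taken so it reflects the data you expected to recover.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its SHA-256 digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare_manifests(source, restored):
    """Report files that are missing, unexpected, or changed after restore."""
    shared = source.keys() & restored.keys()
    return {
        "missing": sorted(set(source) - set(restored)),
        "unexpected": sorted(set(restored) - set(source)),
        "mismatched": sorted(k for k in shared if source[k] != restored[k]),
    }
```

Three empty lists indicate the restored tree matches the manifest; anything else belongs in the evidence repository alongside the restore logs.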

Step #6: Test small-scale and snapshot restores for tape vs. cloud backups

Real-world incidents often call for granular recoveries, such as retrieving a single folder, restoring a database, or reverting to a previous version, rather than a full restore. It’s therefore crucial to test how well your tape and cloud backup systems handle smaller, targeted restores.

Run small-scale restore tests using a single folder, file set, or snapshot from a chosen time. Track the duration of recovery and note any workflow delays, such as additional steps, operator approvals, or unclear version labels.

Step #7: Calculate the cost to store and restore tape and cloud backups

Many organizations focus on low storage costs but overlook the true expense of large-scale recovery. Measuring total restore costs helps balance budget and performance, while avoiding low-cost backups that become costly and time-consuming to restore.

For cloud-based backups, include egress fees, API call costs, and any temporary storage or compute capacity used during recovery workflows. For tape backups, include media handling, offsite retrieval costs, and technician labor.
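The cost components above add up as shown in this sketch. Every rate and quantity in the example is a made-up placeholder, not a real provider or vendor price; substitute the figures from your own invoices and timesheets.

```python
def cloud_restore_cost(*, restored_gb, egress_per_gb, api_requests,
                       cost_per_1k_requests, temp_resource_cost=0.0,
                       labor_hours=0.0, labor_rate=0.0):
    """Total cost of one cloud restore: egress + API calls + temp resources + labor."""
    egress = restored_gb * egress_per_gb
    api = api_requests / 1000 * cost_per_1k_requests
    labor = labor_hours * labor_rate
    return round(egress + api + temp_resource_cost + labor, 2)


def tape_restore_cost(*, media_count, handling_per_media,
                      offsite_retrieval_fee, labor_hours, labor_rate):
    """Total cost of one tape restore: handling + offsite retrieval + labor."""
    handling = media_count * handling_per_media
    labor = labor_hours * labor_rate
    return round(handling + offsite_retrieval_fee + labor, 2)


# Hypothetical 2 TB restore; all rates are illustrative only.
cloud_total = cloud_restore_cost(
    restored_gb=2000, egress_per_gb=0.09,
    api_requests=200_000, cost_per_1k_requests=0.005,
    temp_resource_cost=3.0, labor_hours=1.5, labor_rate=80,
)
tape_total = tape_restore_cost(
    media_count=3, handling_per_media=15,
    offsite_retrieval_fee=120, labor_hours=4, labor_rate=80,
)
```

With these placeholder inputs, labor is the largest component for both media, which is why the operator effort recorded in earlier steps feeds directly into this calculation.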

Step #8: Map risk controls when comparing tape vs. cloud backup tiers

Mapping risk controls helps you determine which backup tier and method best align with your recovery needs. Start by listing the available safeguards for both cloud and tape backups.

For instance, cloud backup solutions may include encryption in transit and at rest, access logging, MFA, and automated versioning. Meanwhile, tape or virtual tape libraries (VTLs) can provide air-gapped backups, write-once immutability, and offline isolation.

Once these safeguards are documented, compare the strengths of both backup mediums. For example, tape backups offer better offline isolation, while cloud backups offer easier access and automated recovery. This helps you see how well each backup medium covers your backup strategy requirements.
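Control mapping reduces to a set comparison between what a dataset requires and what each medium has been verified to provide. In this sketch, the control names and the `CONTROLS` table simply mirror the examples in this step; they are assumptions to be replaced with the safeguards you actually confirmed for each product.

```python
# Safeguards listed in this step; edit to match what you verified in testing.
CONTROLS = {
    "cloud": {
        "encryption_in_transit", "encryption_at_rest",
        "access_logging", "mfa", "versioning",
    },
    "tape_or_vtl": {
        "air_gap", "write_once_immutability", "offline_isolation",
    },
}


def control_gaps(required, medium, controls=CONTROLS):
    """Return the required controls that the medium does not cover."""
    return sorted(set(required) - controls[medium])


def coverage_ratio(required, medium, controls=CONTROLS):
    """Fraction of required controls covered (1.0 if nothing is required)."""
    required = set(required)
    if not required:
        return 1.0
    return len(required & controls[medium]) / len(required)


# Hypothetical requirements for an archival dataset.
archive_requirements = {"write_once_immutability", "air_gap", "encryption_at_rest"}
```

The gaps list is more useful than the ratio alone, because a single missing control can rule a medium out for a compliance-bound dataset.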

Step #9: Compare cloud and tape backup for each dataset

After testing the performance, cost, and risk controls of cloud and tape backups, compare them using a simple scoring matrix. This allows you to determine which backup medium is best suited for each representative dataset.

List key metrics, such as restore speed, data integrity, operator effort, restore cost, and control coverage, then rank them by importance. Score each backup medium, cloud and tape, on every listed metric.

After scoring, make a decision per dataset; for example:

- Leverage cloud-managed backup for operational data that requires rapid restores and seamless access.

- Utilize tape or VTL for archival or compliance data, where long-term retention and offline isolation are preferred.

- Use both cloud and VTL backups, but with defined retention boundaries to avoid overlap and cost creep.
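The scoring matrix from this step can be expressed as a weighted average. The weights and the 1-to-5 scores below are hypothetical examples for an operational dataset, not measured results; set the weights from your own ranking of the metrics.

```python
# Example weights reflecting one possible ranking of the metrics.
WEIGHTS = {
    "restore_speed": 0.30,
    "data_integrity": 0.25,
    "operator_effort": 0.15,
    "restore_cost": 0.15,
    "control_coverage": 0.15,
}


def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of per-metric scores (higher is better)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[m] * w for m, w in weights.items()) / total_weight


# Hypothetical 1-5 scores for an operational dataset.
cloud_scores = {"restore_speed": 5, "data_integrity": 4, "operator_effort": 4,
                "restore_cost": 3, "control_coverage": 3}
tape_scores = {"restore_speed": 2, "data_integrity": 4, "operator_effort": 2,
               "restore_cost": 4, "control_coverage": 5}

decision = max(
    {"cloud": cloud_scores, "tape": tape_scores}.items(),
    key=lambda item: weighted_score(item[1]),
)[0]
```

Running the same function with weights tuned for an archival dataset, where control coverage matters more than speed, may well reverse the decision, which is the point of scoring per dataset.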

Step #10: Capture evidence to support your decision with stakeholders

After testing and comparing cloud and tape backups, document your findings in a clear, concise, and repeatable format. A consistent evidence packet not only standardizes future pilots but also streamlines trend tracking and cross-client comparisons.

Create a one-page report that highlights restore times, data integrity, operator effort, and total restore costs. Include a short analysis of what worked, what slowed recovery, and what improvements you recommend. Present the evidence during QBRs in a client-facing format to show measurable value, build trust, and reinforce your role as a proactive partner in each client’s backup strategy.

Automation touchpoint workflows for restore readiness pilots

Incorporating automation in restore readiness pilots improves result consistency, speeds up data collection, streamlines reporting workflows, and reduces manual technician effort.

Below are some examples:

- Use a scripted harness to launch each restore and automatically sample throughput every minute.

- Run checksum verification on a fixed manifest to confirm data integrity.

- Export results to a CSV file, including timing, errors, and operator prompts.

- Generate automated charts and a decision table for quick inclusion in the evidence packet.

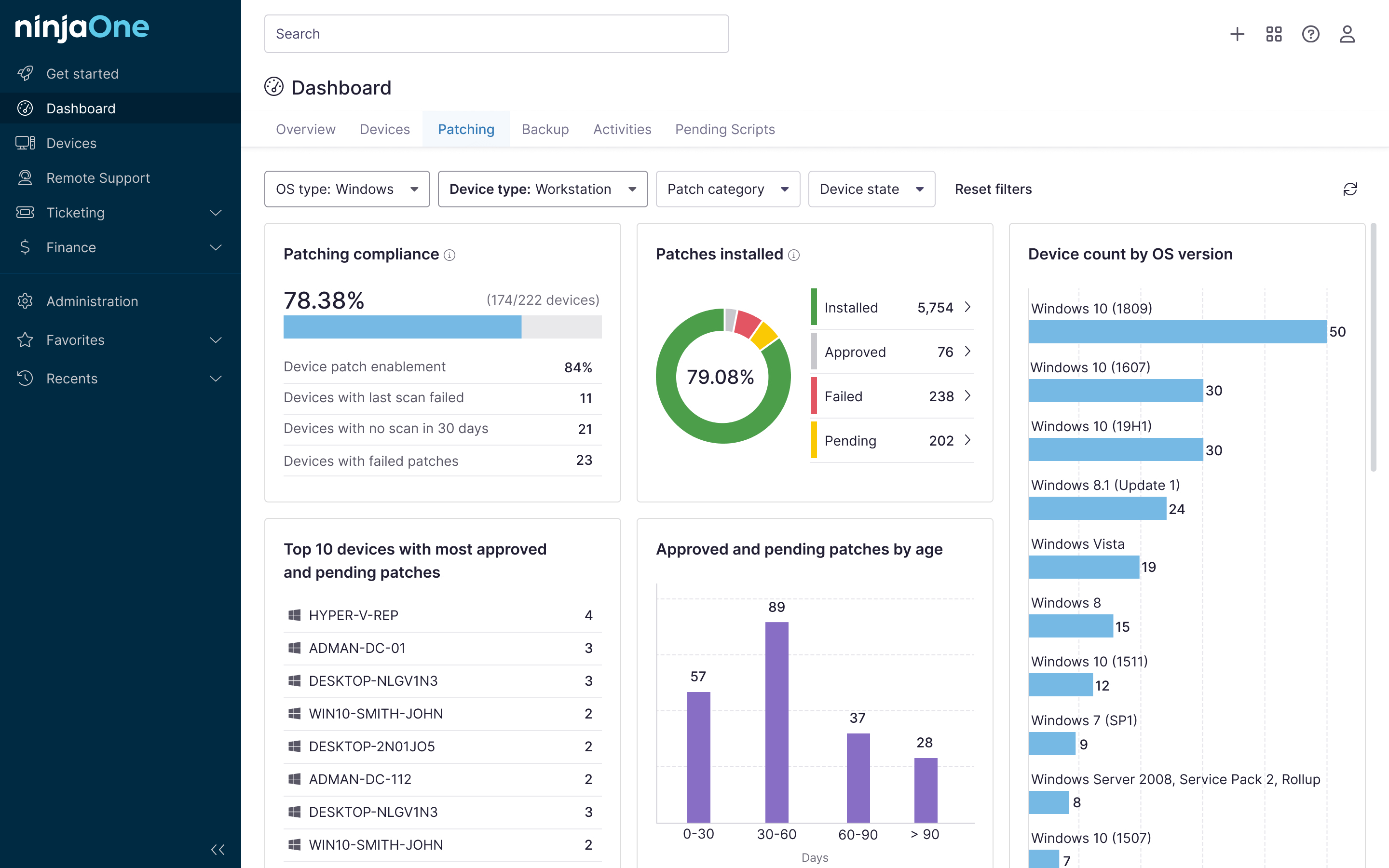
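As an illustration of the CSV export touchpoint, the sketch below serializes restore results with Python's `csv` module. The column names in `FIELDS` are assumptions chosen to match the metrics tracked in this guide, and the sample row is invented.

```python
import csv
import io

# Assumed column layout for the evidence packet.
FIELDS = ["dataset", "medium", "restore_type", "elapsed_seconds",
          "failed_attempts", "integrity_ok", "operator_prompts"]


def results_to_csv(rows):
    """Serialize restore results to CSV text with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Invented sample row for demonstration only.
sample_rows = [{
    "dataset": "file-server", "medium": "cloud", "restore_type": "full",
    "elapsed_seconds": 5400, "failed_attempts": 1,
    "integrity_ok": True, "operator_prompts": 2,
}]
csv_text = results_to_csv(sample_rows)
```

A fixed column order keeps exports from different clients and pilot weeks directly comparable when charted.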

Use NinjaOne when choosing between cloud vs. tape backups

Integrating NinjaOne into your restore readiness pilot helps you automate evidence collection and report creation. Additionally, its tagging and documentation tools simplify metrics tracking, validation, and QBR workflows.

- Scheduled automation: Create scheduled automations to retrieve restore job timings and logs from backup tests, with the option to collect logs daily, weekly, or monthly.

- Device tagging: Use custom fields to identify devices that host or source test data to filter and track which devices have undergone restores and which are pending. Additionally, tagging streamlines the identification of devices and clients included in the restore readiness pilot.

- Centralized documentation: Leverage NinjaOne Documentation to store evidence packets, checklists, and test findings within a centralized and secure repository.

- Automated reporting tool: Use NinjaOne’s reporting features to build custom reports, including restore timings and relevant backup metrics.

Conduct restore readiness pilots when choosing a backup medium

Cloud and tape backups have their own strengths and weaknesses. Conducting a restore readiness pilot reveals which backup medium best fits your strategy and recovery objectives.

When choosing between cloud vs. tape backups, compare outcomes, such as recovery speed, backup integrity, and total costs. With NinjaOne, you can automate validation, reporting, and evidence collection to support test workflows.
