Key Points

- Linux environments make backup more complex: diverse filesystems, workloads, and deployment models call for tailored Linux backup strategies rather than standardized approaches.

- Most Linux data loss is caused by operational issues such as misconfiguration, permission errors, and human mistakes rather than external attacks or platform instability.

- Flexible Linux backup strategies matter more than specific tools, since snapshots, file-level backups, automation, and schedules must match how workloads behave and how data needs to be restored.

- Restore readiness is critical for Linux backup strategies because untested backups create false confidence and often fail during real recovery scenarios.

- Poorly designed Linux backup strategies can cause outages when backup jobs affect performance, conflict with system activity, or restore data with incorrect permissions.

Linux systems run critical workloads across servers, cloud platforms, containers, and infrastructure services. Despite Linux’s reputation for stability and control, data loss is common due to administrative mistakes, configuration changes, and gaps in backup planning.

Unlike standardized desktop platforms, Linux environments vary widely in filesystem behavior, workload design, and access models. This guide explains why flexible Linux backup strategies are required to account for this variability, avoid silent failures, and reduce the risk of permanent data loss.

Understanding Linux-specific data loss risks

Linux is widely known for stability and reliability, but that does not remove the risk of data loss. Like any system, Linux can fail. In most cases, though, data loss is caused not by platform flaws but by operational and environmental issues.

Data loss on Linux systems most often occurs due to:

- Accidental file or directory deletion

- Permission and ownership misconfiguration

- Failed updates or package conflicts

- Filesystem corruption or disk failure

- Application- or service-level errors

Because Linux offers extensive administrative freedom, data protection depends largely on system design choices rather than built-in safeguards. Without intentional backup planning, routine actions can lead to permanent data loss.

Why flexibility matters in Linux backup strategies

Linux environments rarely look the same from one system to another. Differences in system design, storage technologies, and workloads mean backup strategies need to adapt rather than stay fixed.

Backup planning should account for:

- Filesystem differences, such as ext4, XFS, Btrfs, or ZFS, each with distinct snapshot and recovery capabilities

- Workload diversity, including databases, containers, and file servers, all of which handle data consistency differently

- Deployment models, spanning bare metal servers, virtual machines, and cloud-based instances

As Linux systems evolve, rigid backup approaches increase the risk of incomplete coverage, extended recovery times, and operational disruption.
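Snapshot capability depends on the filesystem, so a backup job may need to detect which filesystem holds a path before it chooses a method. The Python sketch below does this in three steps:

1. It parses the contents of `/proc/mounts`.
2. It finds the longest mount point that contains the path.
3. It maps the filesystem type to a suggested approach.

The `SNAPSHOT_METHOD` mapping and its strings are illustrative assumptions, not a standard. The mapping only reflects that Btrfs and ZFS offer native snapshots, while ext4 and XFS usually rely on a block layer such as LVM.

```python
import os
import re

# Illustrative mapping only: Btrfs and ZFS have native snapshots, while ext4 and
# XFS usually depend on a block layer such as LVM for point-in-time copies.
SNAPSHOT_METHOD = {
    "btrfs": "native subvolume snapshot",
    "zfs": "native dataset snapshot",
    "ext4": "block-layer snapshot (e.g. LVM)",
    "xfs": "block-layer snapshot (e.g. LVM)",
}


def _unescape(field: str) -> str:
    r"""Decode octal escapes such as \040 (space) used in /proc/mounts."""
    return re.sub(r"\\([0-7]{3})", lambda m: chr(int(m.group(1), 8)), field)


def fstype_for_path(path: str, mounts_text: str) -> str:
    """Return the filesystem type of the mount that contains an absolute path."""
    best_mp, best_type = "", "unknown"
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue
        mountpoint, fstype = _unescape(fields[1]), fields[2]
        contains = (
            mountpoint == "/"
            or path == mountpoint
            or path.startswith(mountpoint + "/")
        )
        # The longest mount point wins; ">=" lets later over-mounts take precedence.
        if contains and len(mountpoint) >= len(best_mp):
            best_mp, best_type = mountpoint, fstype
    return best_type


def snapshot_method(path: str, mounts_text: str) -> str:
    """Suggest a snapshot approach for the filesystem holding `path`."""
    fstype = fstype_for_path(path, mounts_text)
    return SNAPSHOT_METHOD.get(fstype, "no snapshot method assumed; use file-level backup")


if __name__ == "__main__" and os.path.exists("/proc/mounts"):
    with open("/proc/mounts") as f:
        mounts = f.read()
    for p in ("/", "/var/lib", "/home"):
        # realpath resolves symlinks so the lookup uses the real mount location.
        print(p, "->", snapshot_method(os.path.realpath(p), mounts))
```

Passing the mount table as text, rather than reading it inside the function, keeps the lookup testable with sample data.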

Common backup approaches in Linux environments

Linux backup strategies rarely rely on just one method. Most environments use a mix of approaches to protect different types of data while meeting recovery needs and operational limits.

Common Linux backup approaches include:

- File-level backups, which allow recovery of individual files and directories, such as user data and configuration files

- Snapshot-based backups, which create crash-consistent, point-in-time copies of filesystems or volumes with minimal system disruption

- Application-aware backups, required for databases and other stateful services to maintain data consistency during backup operations

- Hybrid approaches, which combine multiple techniques to balance performance, storage efficiency, and recovery flexibility

Choose backup methods based on recovery needs, including how quickly data must be restored, how consistent it must be, and how much of the system must be recovered, rather than on convenience or familiarity with specific tools.
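The Python example below is a minimal sketch of a file-level backup. It archives a directory into a timestamped `.tar.gz` file using the standard `tarfile` module, which records each file's permission bits, owner and group IDs, and modification time.

The function name `create_file_backup` and the archive naming scheme are hypothetical. The sketch does nothing to ensure application consistency. It therefore suits configuration and user data rather than live database files, which need an application-aware method.

```python
import os
import tarfile
import time


def create_file_backup(source_dir: str, dest_dir: str) -> str:
    """Archive source_dir into a timestamped .tar.gz inside dest_dir.

    tarfile stores each entry's mode, uid/gid, and mtime, so permissions and
    ownership are captured in the archive itself.
    """
    os.makedirs(dest_dir, exist_ok=True)
    stamp = time.strftime("%Y%m%dT%H%M%S")
    name = os.path.basename(os.path.normpath(source_dir))
    archive_path = os.path.join(dest_dir, f"{name}-{stamp}.tar.gz")
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname keeps member paths relative, so the archive can be restored anywhere.
        tar.add(source_dir, arcname=name)
    return archive_path
```

Restoring ownership from such an archive generally requires root. Python's `tarfile` extraction filters (introduced by PEP 706) can also change permission bits and skip ownership on extraction. The restore side therefore deserves its own testing.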

Restore readiness and validation

Backups only matter if they can be restored when something goes wrong. Linux backup strategies need to go beyond collecting data and include regular testing, validation, and clearly documented recovery steps. Effective restore readiness should include:

- Regular restore testing to confirm backups can be recovered within acceptable timeframes

- Validation of permissions and ownership to ensure restored files and services function correctly

- Documented recovery workflows that support repeatable and predictable responses during incidents

- Verification across multiple failure scenarios, including accidental deletion, system corruption, and full system loss
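Part of the permission and ownership validation can be automated. The Python sketch below walks a source tree and compares each entry against a restored copy. It checks:

- presence
- file type
- permission bits
- owner and group IDs
- a SHA-256 content hash, for regular files

The function `compare_trees` is hypothetical. It assumes a known-good copy of the data is available to compare against, as in a test restore. It does not check ACLs, extended attributes, or SELinux labels.

```python
import hashlib
import os
import stat


def _sha256(path: str) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 16), b""):
            digest.update(chunk)
    return digest.hexdigest()


def compare_trees(source: str, restored: str) -> list[str]:
    """Report entries under `source` whose restored copy is missing or differs."""
    problems = []
    for root, dirs, files in os.walk(source):
        for entry in dirs + files:
            src = os.path.join(root, entry)
            rel = os.path.relpath(src, source)
            dst = os.path.join(restored, rel)
            if not os.path.lexists(dst):
                problems.append(f"missing: {rel}")
                continue
            s, d = os.lstat(src), os.lstat(dst)  # lstat: do not follow symlinks
            if stat.S_IFMT(s.st_mode) != stat.S_IFMT(d.st_mode):
                problems.append(f"type: {rel}")
                continue
            if stat.S_IMODE(s.st_mode) != stat.S_IMODE(d.st_mode):
                problems.append(
                    f"mode: {rel} {oct(stat.S_IMODE(s.st_mode))} != {oct(stat.S_IMODE(d.st_mode))}"
                )
            if (s.st_uid, s.st_gid) != (d.st_uid, d.st_gid):
                problems.append(f"owner: {rel} {s.st_uid}:{s.st_gid} != {d.st_uid}:{d.st_gid}")
            if stat.S_ISREG(s.st_mode) and _sha256(src) != _sha256(dst):
                problems.append(f"content: {rel}")
    return problems
```

An empty list means the restored tree matches the source on every attribute checked. Otherwise, each entry names the path and the kind of mismatch.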

Remember, untested backups are assumptions, not safeguards.

Operational considerations

Backup strategies need to work in real-world conditions, not just on paper. Effective backups fit smoothly into daily operations and behave predictably during both normal use and system failures.

Key operational considerations for Linux backup strategies include:

- Impact on production performance, making sure backups don’t slow down or interrupt critical services

- Scheduling alignment, so backups don’t clash with maintenance tasks, updates, or peak usage periods

- Preservation of permissions and access controls, ensuring restored data works as expected

- Recovery complexity during incidents, keeping restore steps simple and reducing guesswork under pressure
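The performance and scheduling considerations can be expressed as a gate that a backup job consults before it starts. The Python sketch below defers the job in two cases:

- the current time falls inside a maintenance window, or
- the one-minute load average per CPU exceeds a threshold.

`MAX_LOAD_PER_CPU`, `BLACKOUT_WINDOWS`, and their values are hypothetical policy settings. Load average is only a rough proxy for production impact.

```python
import os
from datetime import datetime, time as dtime

# Hypothetical policy values; tune them for each environment.
MAX_LOAD_PER_CPU = 0.7
BLACKOUT_WINDOWS = [(dtime(2, 0), dtime(3, 0))]  # e.g. a nightly patching window


def in_window(now: dtime, start: dtime, end: dtime) -> bool:
    """True if `now` falls in [start, end), including windows that cross midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def should_start_backup(now: datetime, load_1min: float, cpu_count: int) -> tuple[bool, str]:
    """Decide whether a backup job may start now, and say why if it may not."""
    if any(in_window(now.time(), start, end) for start, end in BLACKOUT_WINDOWS):
        return False, "inside maintenance window"
    if load_1min / max(cpu_count, 1) > MAX_LOAD_PER_CPU:
        return False, "system load too high"
    return True, "ok"


if __name__ == "__main__":
    load_1min, _, _ = os.getloadavg()  # Unix-only
    ok, reason = should_start_backup(datetime.now(), load_1min, os.cpu_count() or 1)
    print("start backup" if ok else f"defer backup: {reason}")
```

Returning a reason alongside the decision makes deferred runs easier to log and review.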

Operational awareness helps prevent backup processes from becoming a source of instability instead of a layer of protection.

Common failure patterns to evaluate

Backup problems rarely show up as sudden, obvious failures. More often, they appear as repeated issues that point to deeper design or operational gaps. The following patterns commonly surface in Linux backup environments:

Backup exists but data is missing

Usually caused by excluded paths, permission limitations, or an incorrectly defined backup scope.

Restores fail during incidents

Often a sign that restore testing is incomplete, or recovery steps are undocumented or unclear.

Backup impacts system performance

Typically indicates poor snapshot timing or backup schedules that conflict with production workloads.

Security concerns around backup access

Points to overly broad permissions or backup endpoints that are insufficiently protected.

These patterns highlight the gap between backup intent and operational reality and signal where backup strategies need adjustment before failures occur.
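For the "backup exists but data is missing" pattern, one inexpensive check is to list what a backup job would skip. The Python sketch below walks a directory and reports two things:

- files that match exclusion globs
- paths that the current user cannot read

The `audit_scope` function and the glob patterns are assumptions for illustration; real backup tools each have their own exclusion syntax. The script should run as the same user as the backup job. As root, `os.access` reports nearly everything as readable.

```python
import fnmatch
import os


def audit_scope(root: str, exclude_patterns: list[str]) -> dict[str, list[str]]:
    """List files under `root` that a backup job would silently skip."""
    report: dict[str, list[str]] = {"excluded": [], "unreadable": []}

    def record_walk_error(err: OSError) -> None:
        # os.walk skips directories it cannot list; record them instead of ignoring them.
        report["unreadable"].append(os.path.relpath(err.filename, root))

    for dirpath, _dirs, files in os.walk(root, onerror=record_walk_error):
        for name in files:
            full = os.path.join(dirpath, name)
            rel = os.path.relpath(full, root)
            # fnmatch's "*" also matches "/", so "*.log" covers every subdirectory.
            if any(fnmatch.fnmatch(rel, pattern) for pattern in exclude_patterns):
                report["excluded"].append(rel)
            elif not os.access(full, os.R_OK):
                report["unreadable"].append(rel)
    return report
```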

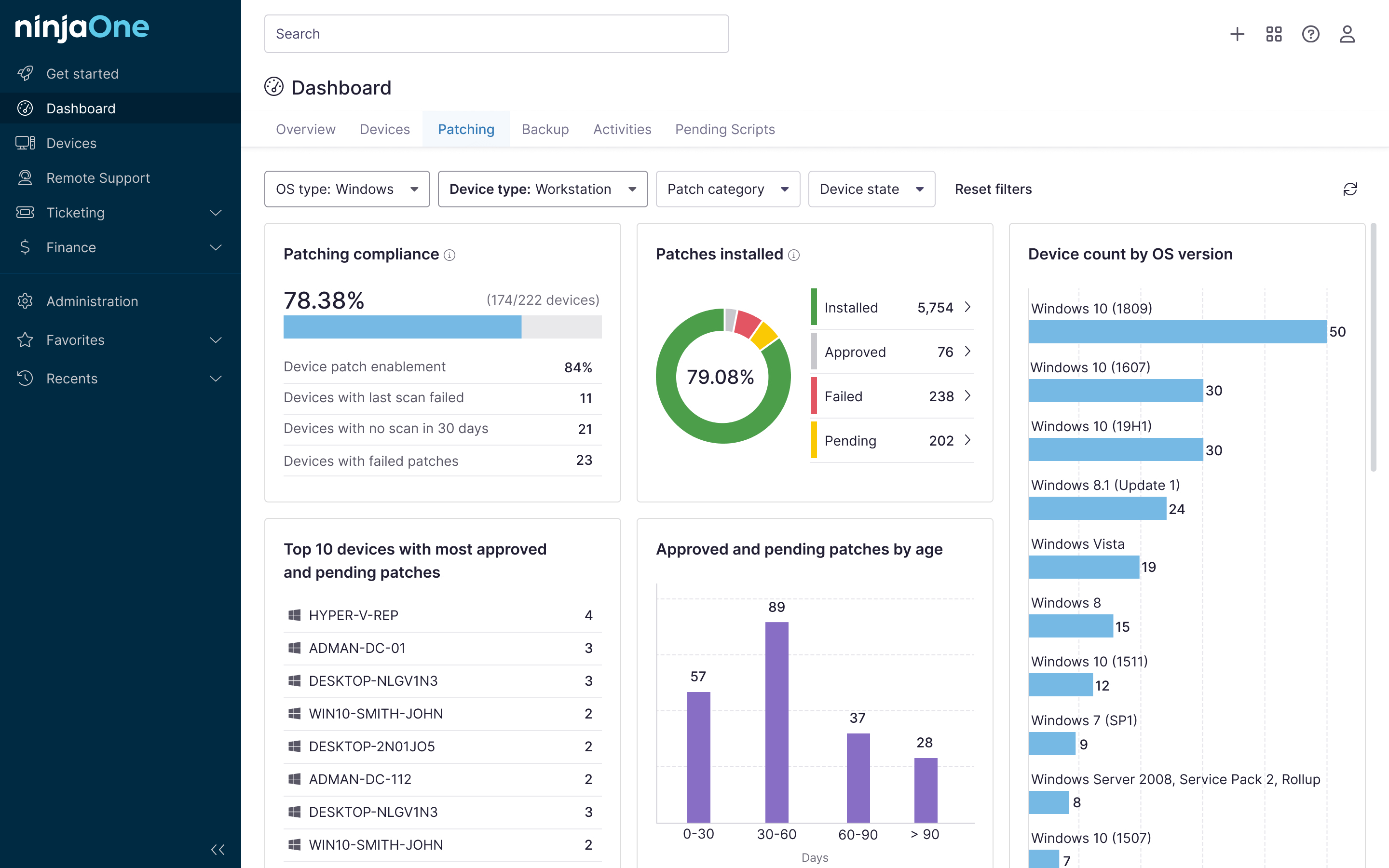

NinjaOne integration

NinjaOne supports Linux backup operations by improving visibility, consistency, and restore readiness across distributed environments. Instead of replacing existing backup tools, it helps teams manage backup reliability as part of day-to-day operations. Here’s how:

| NinjaOne capability | How it helps |
| --- | --- |
| Centralized visibility | Provides a single view of backup status across Linux systems, making it easier to spot failures or gaps that might otherwise go unnoticed |
| Monitoring and alerting | Detects failed or stalled backup processes early, allowing teams to address issues before recovery is needed |
| Policy-driven management | Supports consistent backup-related operational practices, helping reduce configuration drift across systems and environments |
| System health correlation | Highlights performance or resource issues that could affect backup reliability or restore readiness, such as disk pressure or system load |
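At its core, monitoring for failed or stalled backups means noticing when a backup has stopped succeeding. The Python sketch below shows that idea independently of NinjaOne or any other product.

It takes a hypothetical mapping of host names to the time of each host's last successful backup. It returns hosts that have no recorded success, or whose last success is older than a maximum age. The data source, function name, and 26-hour threshold in the usage example are assumptions, not NinjaOne's API or defaults.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def find_stale_backups(
    last_success: dict[str, Optional[datetime]],
    max_age: timedelta,
    now: datetime,
) -> list[str]:
    """Return hosts whose last successful backup is missing or older than max_age."""
    stale = []
    for host, finished in sorted(last_success.items()):
        if finished is None or now - finished > max_age:
            stale.append(host)
    return stale


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    status = {
        "web01": now - timedelta(hours=3),
        "db01": now - timedelta(days=2),
        "new01": None,  # never completed a backup
    }
    print(find_stale_backups(status, timedelta(hours=26), now))  # ['db01', 'new01']
```

A threshold slightly longer than the backup interval tolerates a late run but still flags a daily job that has missed a full day.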

Building resilient Linux backup strategies for evolving environments

Protecting Linux systems from data loss requires backup strategies that stay flexible, workload-aware, and regularly tested. Effective protection starts with understanding Linux-specific risks, continues with choosing backup approaches based on recovery needs, and depends on consistent testing and operational discipline.

When backups are designed for recovery and validated before failures occur, Linux environments remain resilient as systems, workloads, and infrastructure change.
